Enterprise LLM spending more than doubled — from $3.5 billion to $8.4 billion — in just one year. Most of that money was wasted. Here is the architecture that is quietly changing how serious AI products are built.

There is a pattern that keeps showing up inside AI startups that are quietly burning through their runway: they picked a model — GPT-4, Claude Opus, Gemini Ultra — got it working, and then scaled it. Simple query, complex query, customer FAQ, legal reasoning — all routed to the same premium endpoint, at the same premium price, regardless of what the task actually demands.

On a whiteboard, this looks elegant. In a billing dashboard at 10 million requests per month, it looks catastrophic.

The teams that figured this out first — at Atlassian, Walmart, Salesforce, and a handful of well-funded AI-native startups — quietly moved to something different. They stopped asking which model is best and started asking which model is best for this specific task. The result was not a modest efficiency gain. It was a structural shift in what AI infrastructure looks like at scale.

This piece breaks down that shift — what it is, why the numbers are so dramatic, what real implementations look like, and why it is an especially urgent conversation for AI founders building in India right now.

The Fundamental Problem: Flat Pricing Meets Variable Complexity

Every LLM API charges per token — input tokens, output tokens, sometimes both. The problem is that API complexity does not. Your billing treats a user asking “What is your refund policy?” the same as a user asking for a multi-step legal analysis of a cross-border SaaS contract. Both route to your GPT-4 endpoint. Both burn premium compute.

This is the inefficiency hiding in plain sight. The vast majority of real-world queries are not complex. Research consistently shows that a large share of user requests across customer support, content tools, and enterprise assistants are routine — FAQs, summarisations, classifications, form fills. Yet these low-complexity requests, sent to frontier models, are priced at the same rate as the most demanding reasoning tasks those models were actually built for.

“The cheapest API call is the one you don’t make. After that, it’s the one routed to the right model.”Core Principle of Production LLM Cost Engineering, 2026

The price gap between model tiers in 2026 makes this painfully concrete. Premium frontier models — GPT-4 class, Claude Opus — are currently priced in the range of $15 to $60 per million tokens. Lightweight models like GPT-4o mini or Claude Haiku sit below $1 per million tokens on input. That is a 15× to 60× cost differential on the same task, and for simple queries, the output quality difference is negligible.

Using a frontier model for everything is, to use a blunt analogy, like dispatching a cardiologist to hand out aspirin at a pharmacy counter. The capability is there. The cost is unjustifiable.

What Multi-Model Routing Actually Means

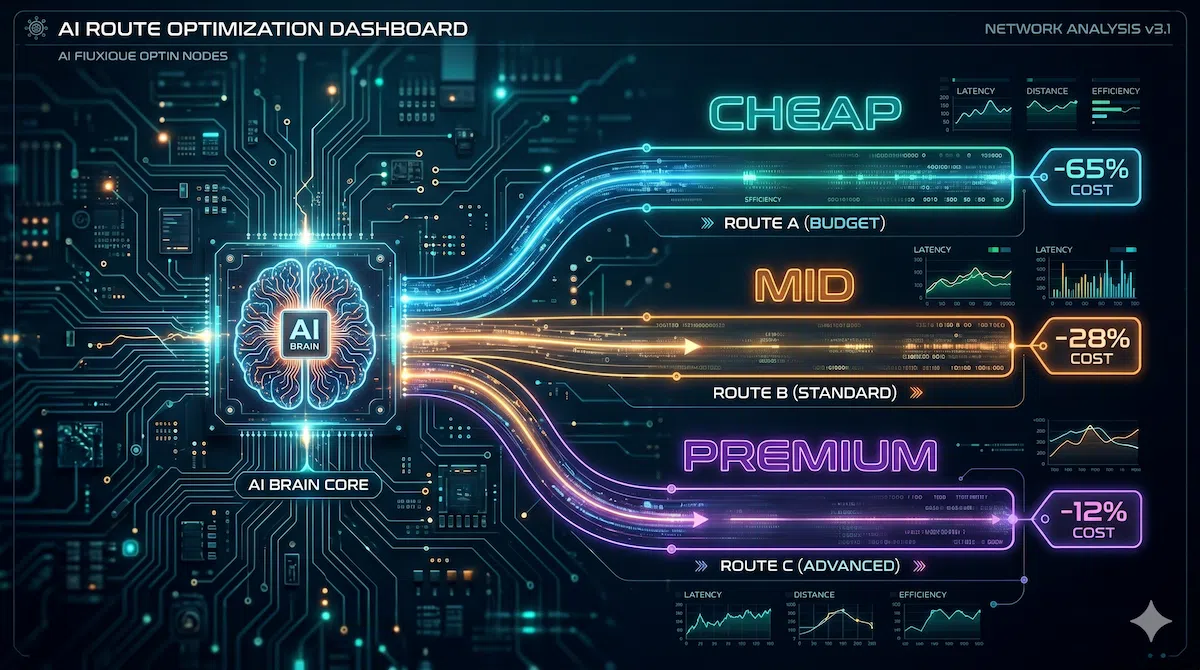

A multi-model strategy does not mean randomly switching between AI providers. It means building an intelligent layer — a model router — that sits between your application and your LLM providers, evaluates each incoming request in real time, and dispatches it to the most cost-efficient model capable of handling it adequately.

Think of it less like a choice between tools and more like an air traffic control system. Every flight does not use the same runway, the same gate, or the same aircraft. Traffic is routed dynamically based on destination, load, and priority. Model routing applies that same logic to AI inference.

- Complexity classifier

- Semantic cache check

- Cost threshold evaluation

- Fallback / failover logic

- FAQs

- Classification

- Simple lookup

- Content generation

- Summarisation

- Moderate reasoning

- Complex reasoning

- Code generation

- Deep analysis

The router makes its decision in milliseconds — typically under 100ms of added latency — and the gains are dramatic. Research from hybrid routing systems shows that sending basic requests through lighter models while reserving frontier models for complex reasoning achieves a 37–46% reduction in overall LLM usage alongside 32–38% faster responses on simple queries.

The Research Is Not Theoretical Anymore

The headline number that the AI infrastructure community keeps referencing comes from UC Berkeley’s Sky Computing Lab. Their RouteLLM framework — published at ICLR 2025 by researchers from Berkeley, Anyscale, and Canva — demonstrated cost reductions of over 85% on the MT Bench evaluation while maintaining 95% of GPT-4’s performance quality. On other benchmarks, cost reductions ranged from 35% on GSM8K to 45% on MMLU, with the router requiring only 14% of queries to be sent to the expensive model when augmented training data was used.

This is not marketing from a vendor. It is peer-reviewed research. And it lines up with what enterprise practitioners are reporting from production deployments.

How a Production Router Works — The Three Layers

There is a common misconception that model routing means writing a few if-else conditions to check whether a message contains certain keywords and send it to one model or another. That is rule-based routing, and it is only the beginning. A production-grade system typically operates in three layered strategies.

Layer 1 — Rule-Based Routing

The simplest and fastest approach. You define explicit patterns: FAQ questions go to the lightweight model, requests containing more than 2,000 tokens go to a model with a long context window, code-generation prompts go to the model optimised for synthesis. In practice, rule-based routing handles the clear-cut cases — FAQ questions to GPT-4o-mini, analytical requests to Claude Sonnet — and is fast and reliable with no added inference cost. It works well when your traffic distribution is predictable and your task taxonomy is stable.

Layer 2 — LLM Classifier Routing

For ambiguous queries that rules cannot cleanly categorise, a second approach uses a small, fast classifier model to evaluate incoming prompts and assign a complexity tier before routing. The cost of this classification step is effectively negligible — at roughly $0.0001 per request, a classifier handling 3,000 monthly interactions adds approximately $0.30 to the monthly bill. The upside is a system that can interpret natural language nuance, handle edge cases, and adapt to shifting traffic patterns without code changes.

Layer 3 — Learned / Adaptive Routing

The most sophisticated tier uses machine learning to train routing policies on historical preference data. This is what RouteLLM demonstrated at Berkeley: trained routers that learn, from real interaction data, which queries genuinely require a frontier model and which can be handled adequately by a cheaper one. A 2026 framework called GreenServ extended this further, using a multi-armed bandit approach to learn adaptive routing policies online without extensive offline calibration — achieving a 22% accuracy improvement and a 31% energy reduction versus random routing across a pool of 16 open-access LLMs.

The three routing strategies are not mutually exclusive. The best production setups combine all three: rules for clear-cut cases, a classifier for ambiguous queries, and adaptive learning for continuous optimisation. The entire routing infrastructure can be implemented in 50–100 lines of code in LangChain, Semantic Kernel, or a custom Python stack.

Task-to-Model Mapping: A Practical Framework

One of the most immediately actionable outputs from this architecture is a task allocation framework — a structured mapping of your workload types to appropriate model tiers. Here is a generalised version based on 2026 production deployments.

| Task Type | Model Tier | Example Models | Typical Volume |

|---|---|---|---|

| FAQs, intent classification, short-form responses, form filling | Cheap | GPT-4o mini, Claude Haiku, Gemini Flash | 60–75% of traffic |

| Content generation, email drafts, summarisation, CRM operations | Mid-Tier | Claude Sonnet, GPT-4o, Gemini Pro | 15–25% of traffic |

| Complex reasoning, multi-step analysis, long-context documents, advanced code | Premium | Claude Opus, GPT-4, Gemini Ultra | 5–15% of traffic |

| Repeated/similar queries (semantic caching layer) | Cache Hit | No model call — cached response served | 20–40% of total requests |

The semantic caching layer deserves particular attention because it represents cost elimination rather than cost reduction. When a user asks “What is your refund policy?” and another asks “How do I get a refund?” — these are semantically equivalent queries. A caching layer recognises this and returns the stored response without making any API call. Production systems report cache hit rates of 20–40% across high-volume applications. One customer support system reduced its overall LLM costs by 69% through caching alone, before any model routing was applied.

Who Is Already Doing This — Enterprise Adoption in 2026

Multi-model deployment is no longer an experimental approach. Several large organisations confirmed as production users include Atlassian, which runs over 20 different models across its product suite; Salesforce; Microsoft, which tests algorithms across Anthropic, Meta, DeepSeek, and xAI for Copilot; and Walmart, which introduced a retail-specific LLM trained on its own data and combines it with other models on AWS Bedrock.

The open-source infrastructure supporting this is mature. RouteLLM from UC Berkeley is publicly available. LiteLLM provides a unified API across 100+ providers. Bifrost, an open-source gateway from Maxim AI, offers semantic caching, automatic failover, load balancing, and full observability with a measured overhead of just 11 microseconds per request at 5,000 requests per second — effectively zero in the context of API calls that take hundreds of milliseconds.

The Real Cost Is Resilience, Not Just Efficiency

There is a dimension of multi-model architecture that goes beyond cost optimisation: operational resilience. When OpenAI experienced service disruptions in 2025, applications without routing went down. Applications with routing frameworks automatically failed over to Anthropic, Google, or other configured providers with zero user-facing downtime.

Single-model dependency is not just a financial risk. It is an architectural fragility. If your product lives on one provider’s uptime, your SLA is a function of their reliability, not yours. A routing layer with configured fallbacks changes this equation entirely — making reliability a property of your system, not a loan from your vendor.

“The winners in AI won’t be the teams with the best model. They’ll be the teams with the best system around the model.”A Principle Increasingly Evident in 2026 Production AI Deployments

The Hidden Cost Multiplier for Indian AI Startups

There is a structural cost disadvantage that every Indian AI founder carries that their counterparts in the US do not. Revenue is earned in rupees. LLM API costs are charged in dollars. By the time an Indian startup pays its OpenAI or Anthropic invoice, it has absorbed an 18% GST on software imports plus a 3–5% forex conversion markup on the transaction — meaning the real cost of an API call is roughly 21–23% higher than the listed price before a single line of code runs.

For a startup burning ₹20 lakh per month on LLM APIs, a 50% routing-driven cost reduction is not an optimisation exercise. It is the difference between a viable unit economics story and an unsustainable burn rate. India’s AI ecosystem — which in 2026 received an additional ₹10,372 crore in the Union Budget and is home to sovereign model initiatives from Sarvam AI and BharatGen — is maturing rapidly. But the cost discipline required to build at Indian price points with global infrastructure demands architectural rigour from day one.

The good news: open-source routing tools like RouteLLM and LiteLLM have no licensing cost. The engineering investment to implement basic routing is measured in hours, not sprints. And Indian AI teams, known for their infrastructure efficiency, are arguably better positioned than most to execute this kind of cost engineering well.

When Single-Model Still Makes Sense

Intellectual honesty requires acknowledging that multi-model architecture is not always the right answer. If you are a pre-seed startup with under 10,000 monthly requests, the operational overhead of managing multiple API integrations, a routing layer, and cross-provider observability is almost certainly not worth it. Start with one model. Validate product-market fit. Optimise infrastructure after the demand exists to justify it.

The threshold where routing starts making financial sense varies, but as a practical rule: when your monthly LLM bill crosses $2,000–$3,000, the ROI on basic routing implementation typically becomes compelling within 60 days. Above $10,000 per month, it is difficult to construct an argument for not doing it.

The Real Challenges — And Why They Are Manageable

A complete picture requires naming the genuine trade-offs. Multi-model architecture adds complexity: multiple API credentials, routing logic to maintain, potential output inconsistency across model tiers, and slightly more elaborate debugging when something goes wrong. These are real costs. The question is whether they are proportionate to the savings at your scale — and above a certain request volume, they almost universally are.

The latency concern — that routing adds meaningful delay — is largely resolved in modern gateway implementations. As noted, Bifrost adds 11 microseconds of overhead at high throughput. The classifier-based routing step adds roughly 200ms for ambiguous queries, which in most product contexts is imperceptible to users.

Quality consistency is the subtler challenge. Different model tiers produce different outputs, and maintaining a coherent user experience across tier boundaries requires careful calibration of routing thresholds and, in some cases, output normalisation. This is engineering work worth doing thoughtfully, not skipping.

Decision Framework: Where Are You?

| Your Situation | Recommended Approach |

|---|---|

| Pre-product / <10K requests/month | Single model. Focus on product validation. Routing adds unnecessary complexity at this stage. |

| $500–$2,000/month LLM spend | Implement semantic caching first. Low effort, high return — often 20–40% reduction before any routing. |

| $2,000–$10,000/month LLM spend | Add rule-based routing for your top 3–4 task categories. 50–100 lines of code. Strong ROI within 60 days. |

| >$10,000/month LLM spend | Full three-layer routing architecture with an AI gateway. Consider RouteLLM or Bifrost. Budget 2–4 engineering weeks. |

| Mission-critical reliability requirements | Multi-model routing is mandatory regardless of cost profile. Single-provider dependency is an unacceptable SLA risk. |

The Bigger Picture: AI Is Becoming Infrastructure

The shift toward multi-model architecture reflects something more fundamental than a cost optimisation tactic. It reflects AI’s maturation from an experimental technology into production infrastructure — and infrastructure is engineered, not assembled by vibes.

In 2022 and 2023, the question was whether AI could do the task at all. In 2024, the question became which model did it best. In 2026, the question is increasingly how to build reliable, cost-efficient, resilient systems that use AI as a component — and that requires thinking like an infrastructure engineer, not just an AI enthusiast.

The teams building the most durable AI products in 2026 are not necessarily using the most powerful models. They are using the right models, in the right configuration, with the right cost controls — and they are building systems where intelligence is allocated efficiently rather than lavished uniformly.

That discipline — treating AI inference as a managed resource rather than a fire-and-forget API call — is what separates products that scale from those that plateau when the economics refuse to co-operate.

What Every AI Founder Should Walk Away With

- Enterprise LLM spend doubled to $8.4B in one year. Most of it was wasted on premium models handling routine tasks.

- UC Berkeley’s RouteLLM demonstrated 85% cost reduction at 95% quality — this is peer-reviewed, production-tested evidence, not vendor marketing.

- A production routing system has three layers: rule-based (fast, clear cases), classifier-based (ambiguous queries), and learned/adaptive (ongoing optimisation).

- Semantic caching alone — before any routing — eliminates 20–40% of API calls by serving cached responses for semantically similar queries.

- For Indian AI startups, the real cost of every API call is ~21–23% higher than the listed price after GST and forex. Cost efficiency is not optional — it is structural survival.

- Multi-model architecture also solves for resilience: when providers have outages, routed systems stay online. Single-model dependency is both a financial and operational risk.

- The implementation threshold: above $2,000/month in LLM spend, routing ROI is typically compelling within 60 days. Above $10,000/month, it is difficult to justify not doing it.

FAQs

What is multi-model LLM routing and how does it work?

Multi-model LLM routing is an intelligent layer that sits between your application and LLM providers, automatically directing each request to the most cost-efficient model capable of handling it. Simple queries like FAQs go to cheap models (~$0.15/M tokens), moderate tasks go to mid-tier models, and only complex reasoning or code generation reaches premium models. The router decides in under 100ms with negligible added latency.

How much can multi-model LLM routing actually reduce AI costs?

Results vary by workload, but the numbers are significant. UC Berkeley’s RouteLLM framework demonstrated up to 85% cost reduction while maintaining 95% of GPT-4’s output quality — published at ICLR 2025. In production, Amazon Bedrock reported 60% savings, and one customer support platform cut costs by 69% through semantic caching alone, before any routing was applied.

When should an AI startup start implementing multi-model LLM routing?

A practical rule: once your monthly LLM bill crosses $2,000, routing ROI typically becomes compelling within 60 days. Below that, a single model is simpler and sufficient. Start with semantic caching first — it eliminates 20–40% of API calls with minimal engineering effort — then layer in rule-based routing for your top task categories as traffic grows.