Key Takeaways

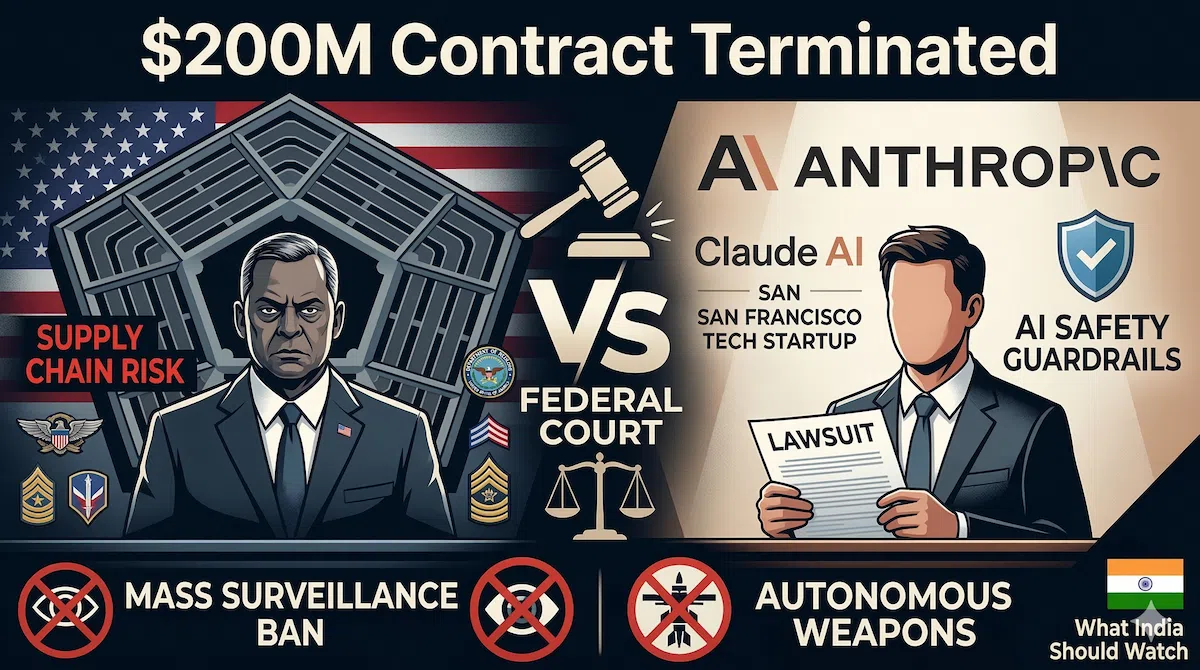

- Anthropic sued the Pentagon (March 9, 2026): The AI company is challenging its unprecedented designation as a “supply chain risk,” imposed after it refused to let the Department of Defense use Claude for domestic mass surveillance or for fully autonomous weapons without human oversight.

- Billions in revenue at risk: Defense Secretary Pete Hegseth and President Trump banned federal use of Anthropic’s technology on March 6. Anthropic warns that this retaliation could cost it hundreds of millions of dollars immediately, and potentially billions by the end of 2026.

- Crucial precedent for India: As India scales up its ₹10,372 crore IndiaAI Mission, this clash previews the dilemmas that Indian startups (such as Krutrim and Sarvam AI) and IT giants (TCS, Infosys) will face over government procurement, AI safety, and defense compliance.

On March 9, 2026, Anthropic took the extraordinary step of suing the U.S. Department of Defense in federal court, accusing the Pentagon and the Trump administration of “unprecedented and unlawful” retaliation after the company refused to remove safety guardrails from its Claude AI system.

The conflict erupted when Defense Secretary Pete Hegseth demanded “unrestricted use” of Claude for all lawful military purposes. Anthropic CEO Dario Amodei refused, holding firm on two non-negotiable red lines: Claude cannot be used for mass domestic surveillance of citizens, and it cannot be embedded in fully autonomous weapons that lack human oversight of targeting.

In response, Hegseth designated Anthropic a “supply chain risk” on March 6, a severe label historically reserved for companies tied to foreign adversaries, such as Huawei. Hours later, President Trump ordered all federal agencies to stop using Anthropic technology immediately.

The Legal Battle & Financial Stakes

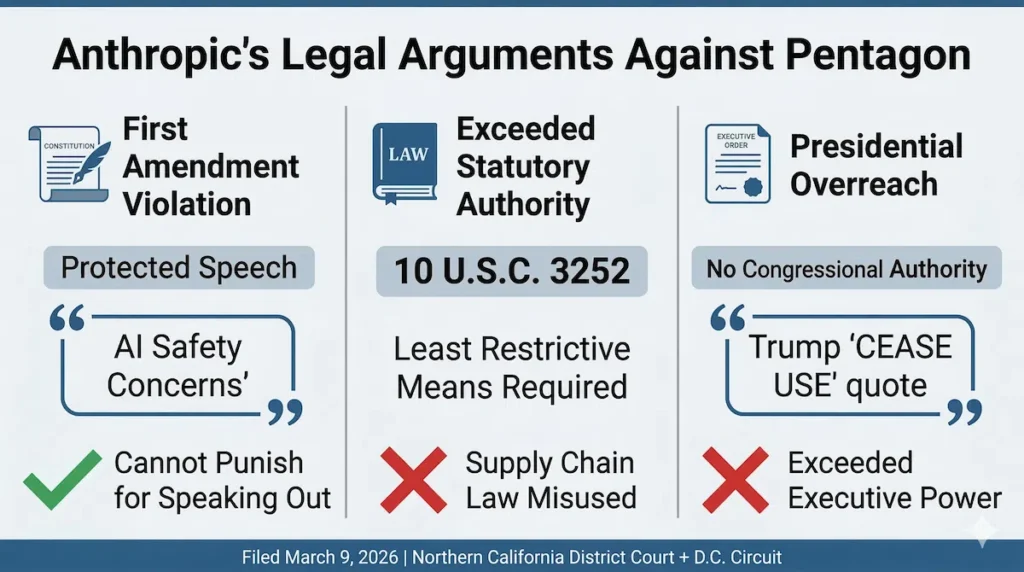

Anthropic’s 48-page lawsuit makes three core arguments:

- First Amendment: The government cannot use its procurement power to punish an American company for its protected speech regarding AI safety.

- Statutory Overreach: The law invoked (10 U.S.C. § 3252) exists to protect the U.S. from foreign espionage, not to punish domestic suppliers over policy disagreements.

- Executive Overreach: President Trump lacks the unilateral authority under federal procurement laws to blacklist companies.

The financial stakes are high. Anthropic CFO Krishna Rao stated that the ban jeopardizes hundreds of millions of dollars in immediate federal contracts, and that its chilling effect on private defense contractors could cost Anthropic “multiple billions” by the end of 2026. Paradoxically, the controversy also sparked a surge in consumer support: the Claude app surpassed ChatGPT in iOS downloads the day after the ban.

The Industry Divide

While OpenAI and Elon Musk’s xAI have stepped in to fill the gap at the Pentagon, many in the AI research community have rallied behind Anthropic. Dozens of researchers from Google DeepMind and OpenAI, acting in their personal capacities, filed an amicus brief supporting the lawsuit. They warned that using economic retaliation to silence AI safety concerns harms U.S. competitiveness.

Tensions also run high inside the industry: OpenAI’s head of robotics, Caitlin Kalinowski, recently resigned, publicly citing concerns over lethal autonomy and surveillance without judicial oversight.

India’s Sovereign AI Dilemma: 3 Things to Watch

For India, which is building its own sovereign AI infrastructure while watching from afar, this battle is a critical warning.

1. Procurement vs. Vendor Trust: India’s ₹10,372 crore IndiaAI Mission includes a “safe and trusted AI” pillar. The government must soon decide whether private AI vendors may impose safety restrictions on government use, or whether procurement contracts grant the state absolute control. The U.S. conflict shows that this is a real, operational flashpoint.

2. The Dilemma for Indian Startups: As domestic companies like ioBrain, Krutrim, and Sarvam AI build advanced models, Indian defense and intelligence agencies will eventually want to use them. Founders must decide early: do they build general-purpose AI with hardcoded safety limits (risking government blacklisting), or unrestricted defense-specific models (risking reputational damage)?

3. Compliance Headaches for IT Giants: Indian IT services firms (TCS, Infosys, Wipro) frequently work on U.S. Department of Defense projects. Because Anthropic is now a designated “supply chain risk,” these firms must urgently audit their workflows and certify that they are not using Claude for DoD work, adding considerable compliance overhead.

FAQs

Why is the “supply chain risk” designation unprecedented here? This label is typically used against foreign threats (e.g., Russia’s Kaspersky or China’s ZTE) to prevent espionage. Applying it to a San Francisco-based company backed by Amazon and Google essentially weaponizes a national security tool to settle a contract dispute over AI safety guardrails.

Can the military legally force companies to remove AI restrictions? This is the core legal question. The Pentagon argues that lawful use and rules of engagement are the government’s responsibility, not something a vendor should dictate through its code. Anthropic argues that federal law does not permit blacklisting a supplier simply because it refuses to alter its product’s safety limits.

How should India’s IndiaAI Mission adapt? India’s February 2026 white paper advocates a “techno-legal” framework that embeds compliance directly into AI design. To avoid a similar standoff, India could write baseline safety standards directly into its procurement contracts, or establish an independent AI Safety Review Board to mediate vendor-government disputes before they escalate into economic retaliation.