Key Takeaways

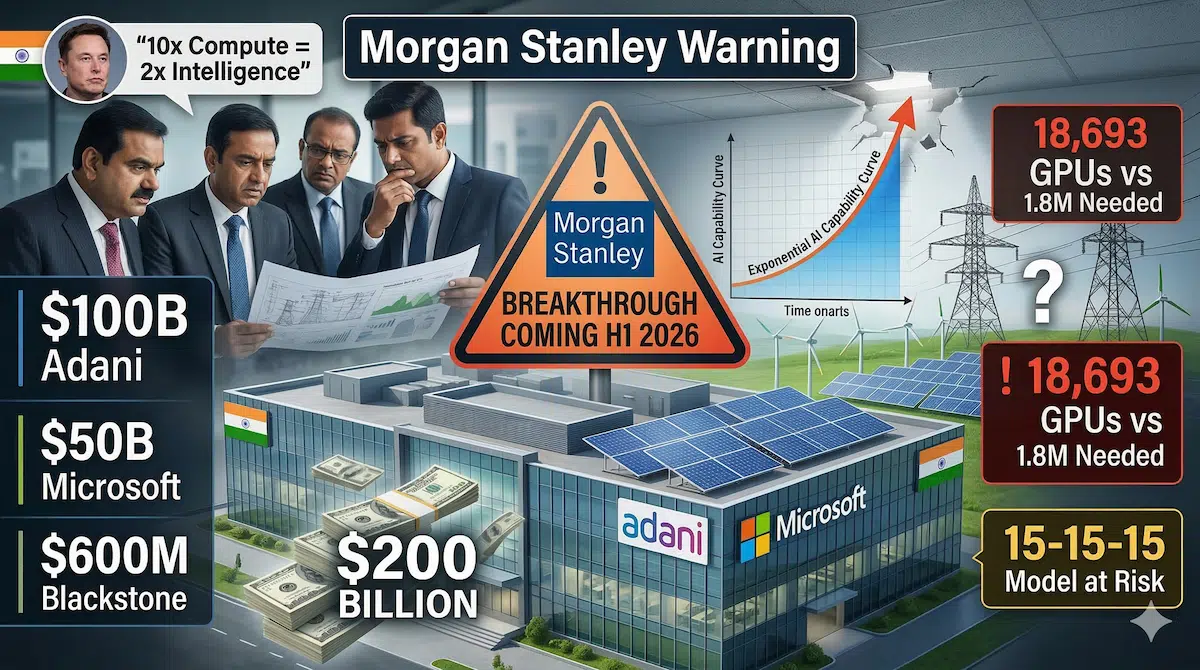

- Morgan Stanley warns that a major AI breakthrough expected in H1 2026 will create a “deflationary force” that the world’s infrastructure can’t support: the investment bank’s March 14 research note projects an imminent capability jump requiring massive data center expansion, but existing 15-year lease models with 15% yields and $15/watt valuations assume stable demand, which breakthrough technologies have historically disrupted within months

- India committed over $200 billion at the AI Impact Summit last week, days before Morgan Stanley’s infrastructure strain warning: Adani pledged $100B for renewable-powered AI data centers, Microsoft announced a $50B India expansion, Blackstone committed $600M, and the government allocated a $1.1B VC fund. All are betting on sustained AI compute demand that Morgan Stanley says may face “deflationary pressure” as breakthroughs reduce compute needs

- Elon Musk’s “10x compute = 2x intelligence” rule of thumb implies India’s 18,693 GPUs fall far short: the IndiaAI Mission’s current compute infrastructure (including 13,000 Nvidia H100s and 1,500 H200s) provides baseline capability, but even a 4x intelligence improvement would require a 100x compute increase to roughly 1.8 million GPUs. That is a ₹2.4 lakh crore ($30B) investment India hasn’t budgeted for, because its $200B in data center commitments focuses on infrastructure, not chips

On March 14, 2026, Morgan Stanley published a research note that should unsettle everyone who cheered India’s $200+ billion AI infrastructure commitments announced just days earlier at the India AI Impact Summit. The investment bank’s Technology, Media & Telecom Research team warned that a major AI breakthrough—likely arriving within the next six months—will fundamentally disrupt the economic assumptions underlying the global data center boom India is rushing to join.

The timing is stark. India’s government, Adani Group, Microsoft, Blackstone, and other investors collectively pledged over $200 billion for AI infrastructure development between March 5 and 11, 2026. Morgan Stanley’s note arrived on March 14, essentially arguing that the world isn’t ready for what’s coming and that massive capital commitments based on linear AI improvement assumptions may face rapid obsolescence.

The core tension: India is building infrastructure for today’s AI capabilities while Morgan Stanley warns tomorrow’s AI will render much of that infrastructure economically unviable or require capabilities India isn’t planning to provide.

The “15-15-15” Model India Is Betting On

Morgan Stanley’s analysts describe the current data center investment model as “15-15-15”: 15-year lease commitments from hyperscalers, 15% targeted yields for investors, and $15 per watt valuations for data center capacity. This model assumes stable, predictable demand growth for AI compute over the next decade and a half.

India’s $200+ billion infrastructure wave follows this exact playbook. Adani’s $100 billion renewable AI data center pledge spans multiple facilities designed for 15-20 year operational lifespans. Microsoft’s $50 billion India expansion includes long-term cloud infrastructure commitments. Blackstone’s $600 million investment targets data center real estate with similar multi-decade horizons.

The problem, according to Morgan Stanley, is that major AI breakthroughs historically compress timelines dramatically. The bank’s analysts point to how transformer architectures (introduced 2017) reached mainstream deployment within 18-24 months, not 15 years. GPT-3 to GPT-4 represented a capability jump that arrived in under three years, not a decade. DeepSeek R1’s 94% cost reduction versus ChatGPT happened in weeks once the model was released, not gradually over years.

If the anticipated H1 2026 breakthrough delivers similar discontinuous improvement—and Morgan Stanley’s note suggests it will—then 15-year assumptions underlying India’s infrastructure investments may face reality checks within 15 months.
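To make the gap between a 15-year underwriting horizon and a 15-month reality check concrete, here is a minimal sketch. It assumes, and this is an assumption rather than anything stated in the note as summarized here, that the 15% yield is a simple annual income on the $15-per-watt valuation, with no discounting, rent escalators, or residual value. The function names are hypothetical.

```python
# Illustrative only: treats the "15% yield" as simple annual income on the
# $15/watt valuation. No discounting, rent escalators, or residual value.

VALUATION_PER_WATT = 15.0   # USD per watt, from the 15-15-15 description
ANNUAL_YIELD = 0.15         # ASSUMED to mean annual income / valuation
LEASE_YEARS = 15


def cumulative_income_per_watt(years: float) -> float:
    """Undiscounted income per watt collected after `years` of the lease."""
    return VALUATION_PER_WATT * ANNUAL_YIELD * years


def simple_payback_years() -> float:
    """Years of income needed to recover the valuation (simple payback)."""
    return 1.0 / ANNUAL_YIELD


if __name__ == "__main__":
    for years in (1.25, simple_payback_years(), LEASE_YEARS):
        share = cumulative_income_per_watt(years) / VALUATION_PER_WATT
        print(f"{years:5.2f} years: {share:.0%} of valuation recovered")
```

Under these simplified assumptions, a lease recovers less than a fifth of the valuation in its first 15 months and needs between six and seven years to pay back, which is why a demand shock early in the term matters so much to the model.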

What “Breakthrough” Actually Means: Compute Implications

Elon Musk articulated the scaling challenge facing India and every other country at a recent AI conference: “10x more compute gets you about 2x more intelligence.” Under this sublinear relationship, capability grows only as a small power of compute, so meaningful capability improvements require disproportionately more computational resources: two doublings of intelligence take 100x the compute, not 4x.
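Read literally, the quoted rule of thumb can be written as a power law. The derivation below is a sketch: the symbols $I$ (capability), $C$ (compute), and the baselines $I_0$ and $C_0$ are notation introduced here for illustration, not something Musk or Morgan Stanley specified.

```latex
\[
\frac{I}{I_0} = 2^{\log_{10}(C/C_0)}
             = \left(\frac{C}{C_0}\right)^{\log_{10} 2}
             \approx \left(\frac{C}{C_0}\right)^{0.301}
\qquad\Longleftrightarrow\qquad
\frac{C}{C_0} = 10^{\log_{2}(I/I_0)}
             \approx \left(\frac{I}{I_0}\right)^{3.32}
\]
```

For example, $I/I_0 = 2$ gives $C/C_0 = 10$, and $I/I_0 = 4$ gives $C/C_0 = 10^2 = 100$.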

India’s current IndiaAI Mission infrastructure comprises 18,693 GPUs: 13,000 Nvidia H100s, 1,500 Nvidia H200s, and approximately 4,200 additional units from various providers. This represents baseline capability for training modest AI models and running inference at moderate scale.

But Morgan Stanley’s breakthrough scenario implies reaching GPT-5 or Claude 4 level capabilities, which require orders of magnitude more compute. If India wants to quadruple current AI intelligence levels under the Musk scaling rule, it needs two successive 10x steps, or 100x current compute: about 1.87 million GPUs, rounded here to 1.8 million. At approximately ₹13 lakh ($16,000) per H100 GPU, that’s ₹2.4 lakh crore ($30 billion) just for chips, before factoring in data centers, power infrastructure, cooling systems, and networking.
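The arithmetic in this paragraph can be reproduced with a short sketch. The GPU count and per-unit prices are the article's figures; the function names are hypothetical, and the code assumes total compute scales linearly with the number of GPUs, which is a simplification.

```python
import math

# Figures quoted in the article; helper names are illustrative.
CURRENT_GPUS = 18_693
H100_PRICE_INR = 13 * 100_000      # ₹13 lakh (1 lakh = 100,000)
H100_PRICE_USD = 16_000
LAKH_CRORE = 10**12                # 1 lakh crore = 10^5 * 10^7 rupees


def compute_multiplier(intelligence_multiplier: float) -> float:
    """Compute factor implied by '10x compute = 2x intelligence'."""
    doublings = math.log2(intelligence_multiplier)
    return 10.0 ** doublings


def gpus_needed(intelligence_multiplier: float, current: int = CURRENT_GPUS) -> int:
    """GPU count, ASSUMING compute scales linearly with the number of GPUs."""
    return round(current * compute_multiplier(intelligence_multiplier))


if __name__ == "__main__":
    gpus = gpus_needed(4)
    print(f"GPUs for 4x: {gpus:,}")
    print(f"Chip cost: ₹{gpus * H100_PRICE_INR / LAKH_CRORE:.2f} lakh crore")
    print(f"Chip cost: ${gpus * H100_PRICE_USD / 1e9:.1f} billion")
```

The exact product is 1,869,300 GPUs, which the article rounds to 1.8 million; the rupee and dollar totals land close to the quoted ₹2.4 lakh crore and $30 billion either way.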

India’s $200 billion commitments focus heavily on data center construction, renewable energy generation, and real estate development. GPU procurement budgets appear minimal by comparison. The IndiaAI Mission allocated ₹10,372 crore ($1.25 billion) total across seven pillars, with compute infrastructure receiving perhaps 30-40% of that allocation—roughly ₹3,000-4,000 crore ($360-480 million). This funds the existing 18,693 GPUs but falls catastrophically short of the 1.8 million GPU requirement implied by serious capability scaling.
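A rough way to size this shortfall is to compare the estimated compute allocation with the chip bill computed earlier. The sketch below uses only the article's figures, including its own "perhaps 30-40%" estimate of the compute share; the function names are hypothetical.

```python
# All amounts in crore rupees; values are the article's figures.
MISSION_BUDGET_CRORE = 10_372             # total IndiaAI Mission allocation
COMPUTE_SHARE_RANGE = (0.30, 0.40)        # the article's estimate, not an official split
REQUIRED_CHIP_SPEND_CRORE = 2.4 * 10**5   # ₹2.4 lakh crore expressed in crore


def compute_allocation_crore(share: float) -> float:
    """Portion of the mission budget assumed to go to compute."""
    return MISSION_BUDGET_CRORE * share


def shortfall_multiple(share: float) -> float:
    """How many times larger the chip bill is than the compute allocation."""
    return REQUIRED_CHIP_SPEND_CRORE / compute_allocation_crore(share)


if __name__ == "__main__":
    for share in COMPUTE_SHARE_RANGE:
        print(
            f"share {share:.0%}: allocation ₹{compute_allocation_crore(share):,.0f} crore, "
            f"chip bill is {shortfall_multiple(share):.0f}x larger"
        )
```

On these numbers the chip bill is very roughly 58 to 77 times the compute allocation, depending on which end of the 30-40% range is used.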

The disconnect: India is building massive AI infrastructure without proportional investment in the computational hardware that actually generates AI capabilities.

The Deflationary Job Impact India Isn’t Preparing For

Morgan Stanley’s note characterizes the coming AI breakthrough as a “deflationary force”—meaning it will reduce costs, compress margins, and eliminate jobs faster than new roles emerge. For India, this projection conflicts directly with government narratives around AI job creation.

India’s Finance Minister Nirmala Sitharaman, announcing the ₹10,372 crore IndiaAI Mission, emphasized AI skill development and job creation. The assumption: AI generates new technology roles faster than it eliminates existing positions. Morgan Stanley’s analysis suggests the opposite for breakthrough-level AI.

The bank’s economists point to historical technology disruptions. When agricultural mechanization arrived, it didn’t create an equal number of new farm jobs; it eliminated 90% of agricultural employment over several decades while creating far fewer manufacturing jobs. Industrial automation reduced manufacturing employment as a percentage of the workforce even as absolute manufacturing output grew. An AI breakthrough, Morgan Stanley argues, follows this pattern but compressed into years, not decades.

For India specifically, the vulnerability concentrates in sectors where AI substitution is already demonstrable. Business process outsourcing employs 5.4 million Indians; according to recent industry projections, AI voice agents could replace 40-50% of entry-level customer service roles. Information technology services exports exceed $220 billion annually; AI coding assistants threaten the junior developer positions that constitute India’s talent pipeline. Data entry, documentation, basic analysis: all roles where India built comparative advantage face AI substitution within 24-36 months if breakthrough capabilities arrive as Morgan Stanley projects.

The “deflationary” characterization matters economically. If AI reduces labor costs for services India exports, then India’s pricing power collapses even if productivity improves. A 10x productivity improvement from AI means little if global clients pay 90% less because AI eliminated scarcity value of human labor.
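The pricing argument reduces to one multiplication, sketched below. It assumes revenue equals units delivered times price per unit, which is a deliberate simplification; the function name and the scenario values are illustrative.

```python
def revenue_multiple(output_multiple: float, price_multiple: float) -> float:
    """Revenue relative to today, ASSUMING revenue = units delivered * price per unit."""
    return output_multiple * price_multiple


if __name__ == "__main__":
    # Clients buy all 10x output, but at a 90% lower price.
    print(revenue_multiple(output_multiple=10, price_multiple=0.10))
    # Clients buy the same volume as today at a 90% lower price.
    print(revenue_multiple(output_multiple=1, price_multiple=0.10))
```

In the first scenario a tenfold productivity gain only holds revenue flat; in the second, where global demand for the service does not expand, revenue falls to a tenth of its current level.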

India’s policy response—heavy infrastructure investment, modest retraining budgets—doesn’t address deflationary scenarios. Infrastructure assumes growing demand for Indian AI services. Deflation means shrinking demand regardless of infrastructure quality.

Power Grid Reality Versus Renewable Promises

Adani’s $100 billion pledge emphasized renewable energy-powered AI data centers, positioning India as a green AI infrastructure destination. The announcement generated positive coverage highlighting climate-conscious technology development.

Morgan Stanley’s note implicitly questions whether renewable power commitments can meet breakthrough AI’s energy demands on required timelines. Current data centers consume approximately 1-2 megawatts per 1,000 GPUs. The 1.8 million GPU requirement implied by the Musk scaling rule would demand 1,800-3,600 megawatts of dedicated AI computing power, equivalent to 2-3 large coal power plants or 18-36 large solar farms.
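The power estimate follows directly from the per-GPU figure. In the sketch below, the 100 MW solar farm size is an assumption inferred from the article's "18-36 large solar farms" comparison, and it ignores capacity factor and storage; the function names are hypothetical.

```python
GPUS_REQUIRED = 1_800_000
MW_PER_1000_GPUS_RANGE = (1.0, 2.0)   # article's "1-2 megawatts per 1,000 GPUs"
SOLAR_FARM_MW = 100.0                 # ASSUMED nameplate size, inferred from the article


def power_demand_mw(gpus: int, mw_per_1000_gpus: float) -> float:
    """Dedicated power demand in megawatts for a given GPU count."""
    return gpus / 1000 * mw_per_1000_gpus


def solar_farm_equivalents(demand_mw: float, farm_mw: float = SOLAR_FARM_MW) -> float:
    """Number of farms by nameplate capacity only (no capacity factor or storage)."""
    return demand_mw / farm_mw


if __name__ == "__main__":
    for rate in MW_PER_1000_GPUS_RANGE:
        demand = power_demand_mw(GPUS_REQUIRED, rate)
        print(f"{rate} MW per 1,000 GPUs: {demand:,.0f} MW, "
              f"about {solar_farm_equivalents(demand):.0f} solar farms")
```

Because solar output is intermittent, the real number of farms needed for round-the-clock operation would be higher than this nameplate count suggests.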

India’s renewable energy sector is growing but faces grid integration challenges. Solar and wind generation require storage or backup power for 24/7 data center operations. Battery storage at data center scale remains expensive and unproven in India. Grid reliability concerns already cause enterprise customers to run diesel generators as backup.

The timeline problem: Adani’s renewable AI data centers require 5-7 years to build from announcement to operation. Morgan Stanley’s breakthrough timeline is 6 months. If capability jumps arrive before infrastructure completes, India either misses the window or must rely on carbon-intensive conventional power—undermining the entire green positioning.

China already operates 50,000+ lights-out factories running AI-optimized manufacturing 24/7. These facilities don’t wait for renewable power—they use whatever electricity is available to maintain competitive advantage. If India insists on renewable-only AI infrastructure while competitors use any power source, India concedes speed to principle.

What India Should Actually Do

Morgan Stanley’s warning doesn’t invalidate India’s AI ambitions, but it demands strategic recalibration.

First, prioritize GPU procurement over data center construction. Infrastructure is useless without computational hardware. Redirect a portion of the $200 billion toward securing GPU allocations from Nvidia, AMD, and domestic chip initiatives. India should negotiate multi-year GPU supply agreements before global shortage emerges post-breakthrough.

Second, accelerate application layer development over infrastructure. India’s competitive advantage isn’t building data centers—it’s building AI applications for Indian languages, problems, and markets. The 20 startups building on Sarvam AI’s voice platform, healthcare AI companies deploying in rural India, agricultural AI serving farmers—these represent India’s actual AI opportunity. Infrastructure serves applications, not vice versa.

Third, prepare workforce transition programs now, not after displacement. If Morgan Stanley’s deflationary scenario materializes, millions of Indians in BPO, IT services, data entry, and documentation face unemployment within 24 months. Retraining programs require 12-18 months to show results. India should start immediately rather than waiting for crisis.

Fourth, build flexible infrastructure that can adapt to breakthrough scenarios. The 15-15-15 model assumes static technology. India should negotiate shorter lease terms, modular designs allowing rapid reconfiguration, and partnership structures that share breakthrough risk between investors and users rather than concentrating risk on infrastructure providers.

Morgan Stanley’s note is a warning, not a prediction. But warnings from investment banks analyzing trillions in infrastructure capital deserve attention from countries betting hundreds of billions on assumptions those banks are questioning.

FAQs

What specific AI breakthrough is Morgan Stanley warning about for H1 2026?

Morgan Stanley’s March 14 research note doesn’t identify a specific technology, but analysts point to several candidates: GPT-5 or Claude 4 level language models with dramatically improved reasoning, multimodal AI combining vision/audio/text seamlessly, or AI agents capable of autonomous complex task completion. The bank’s economists cite pattern recognition from previous disruptions—transformer models (2017), GPT-3 (2020), ChatGPT (2022) each arrived suddenly and compressed adoption timelines from years to months. H1 2026 timing aligns with known development cycles at OpenAI, Anthropic, Google DeepMind, and Chinese labs working on next-generation models. For India, the specific technology matters less than the capability jump: if breakthrough AI reduces compute costs 90%+ like DeepSeek did versus GPT-4, then India’s $200B infrastructure bet assumes demand patterns that may evaporate.

How does India’s $200 billion AI commitment compare to China and the US?

India’s $200B+ commitment (Adani $100B, Microsoft $50B, Blackstone $600M, government $1.1B, others) concentrates on infrastructure: data centers, renewable energy, real estate. China’s recent AI spending focuses on chips and applications: $8.4B deployed into practical AI startups in Q1 2026 alone, plus massive state funding for domestic semiconductor production targeting AI chips. The US private sector invested $67B in AI companies in 2025 with $45B+ expected in Q1 2026, primarily into model development (OpenAI, Anthropic, xAI) and chip design (Nvidia, AMD). India’s infrastructure-heavy approach differs fundamentally: it is constructing the building before buying the machines that go inside. China and the US prioritize the machines (GPUs, algorithms, talent), assuming infrastructure follows demand. Morgan Stanley’s warning validates the machines-first approach; breakthrough AI needs compute power and algorithms, not just square footage.

What does Elon Musk’s “10x compute = 2x intelligence” mean for India’s AI strategy?

Musk’s scaling law describes diminishing returns in AI capability improvement: doubling intelligence requires 10x more computational resources (GPUs, training time, energy). India’s current 18,693 GPUs provide baseline capability. Reaching 2x current intelligence needs 186,930 GPUs. Reaching 4x intelligence (approaching GPT-5 level) needs 1.8 million GPUs. At ₹13 lakh per Nvidia H100, that’s ₹2.4 lakh crore ($30B) for chips alone—more than India’s entire $1.25B IndiaAI Mission budget and 15% of the $200B infrastructure commitments. India allocated heavily to data center construction, renewable energy, and real estate, but GPU procurement budgets remain minimal. This creates a mismatch: India is building infrastructure to house AI compute but isn’t buying the compute itself. It’s like constructing massive parking garages without budgeting for cars.

Should India cancel its $200 billion AI infrastructure plans based on Morgan Stanley’s warning?

No, but India should restructure commitments to manage breakthrough risk. Current 15-year lease assumptions and infrastructure-first approach face vulnerability if AI capabilities jump suddenly. India should: (1) Negotiate shorter, more flexible terms—5-7 year initial periods with extension options rather than locked 15-year commitments; (2) Shift more capital toward GPU procurement and chip development instead of just data center construction; (3) Build modular infrastructure allowing rapid reconfiguration if breakthrough changes compute requirements; (4) Focus on application layer (Indian language AI, healthcare, agriculture) where India has competitive advantage, not commodity infrastructure competing with China’s scale; (5) Accelerate workforce transition programs before job displacement hits BPO and IT services sectors. Morgan Stanley’s warning is to avoid overcommitting to infrastructure assumptions that may not hold, not to abandon AI investment entirely.